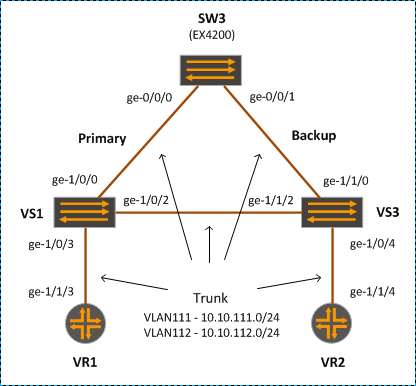

In this post, we will demonstrate load balancing VPLS traffic using multiple LSP tunnels.

Topology

Configuration

[edit]

lab@PE1#

interfaces {

ge-1/0/7 {

description "LINK - PE2 ge-1/0/7";

unit 0 {

family inet {

address 10.10.101.1/24;

}

family mpls;

}

}

ge-1/1/7 {

description "LINK - PE2 ge-1/1/7";

unit 0 {

family inet {

address 10.10.102.1/24;

}

family mpls;

}

}

ge-1/0/6 {

vlan-tagging;

encapsulation flexible-ethernet-services;

/* VPLS VLAN */

unit 600 {

description "vpls interface to SW1";

encapsulation vlan-vpls;

vlan-id 600;

family vpls;

}

}

}

protocols {

rsvp {

load-balance bandwidth;

interface all;

}

mpls {

label-switched-path PE1-to-PE2-LSP1 {

to 10.1.1.22;

bandwidth 200m;

no-cspf;

primary via-Ge1;

}

label-switched-path PE1-to-PE2-LSP2 {

to 10.1.1.22;

bandwidth 200m;

no-cspf;

primary via-Ge2;

}

path via-Ge1 {

10.10.101.2;

}

path via-Ge2 {

10.10.102.2;

}

interface ge-1/0/7.0;

interface ge-1/1/7.0;

}

bgp {

local-as 65000;

group PEs {

type internal;

local-address 10.1.1.11;

family inet {

unicast;

}

family inet-vpn {

unicast;

}

family l2vpn {

signaling;

}

neighbor 10.1.1.22;

}

}

ospf {

traffic-engineering;

area 0.0.0.0 {

interface ge-1/0/7.0 {

interface-type p2p;

}

interface ge-1/1/7.0 {

interface-type p2p;

}

interface lo0.0;

}

}

}

routing-instances {

VPLS-1 {

instance-type vpls;

interface ge-1/0/6.600;

route-distinguisher 10.1.1.11:11;

vrf-target target:65000:100;

protocols {

vpls {

site-range 10;

site Site1 {

site-identifier 1;

}

}

}

}

}

policy-options {

policy-statement per-flow-load-balance {

then {

load-balance per-packet;

}

}

}

routing-options {

forwarding-table {

export per-flow-load-balance;

}

}
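Despite its name, the `load-balance per-packet` action in the export policy above results in per-flow balancing on current hardware: the forwarding table installs both LSP next hops, and the PFE picks one per flow by hashing packet fields. The Python sketch below only illustrates that idea. CRC32 over the MAC pair is a stand-in chosen for illustration (the real hash function and its exact inputs are internal to the PFE), and `pick_lsp` and the sample MAC addresses are hypothetical.

```python
import zlib

# LSP names taken from the configuration above.
LSPS = ["PE1-to-PE2-LSP1", "PE1-to-PE2-LSP2"]


def pick_lsp(src_mac: str, dst_mac: str, lsps=LSPS) -> str:
    """Map a flow (identified here only by its MAC pair) to one LSP.

    Assumption: CRC32 is an illustrative hash, not the one the PFE uses.
    """
    key = f"{src_mac.lower()}|{dst_mac.lower()}".encode()
    return lsps[zlib.crc32(key) % len(lsps)]


if __name__ == "__main__":
    # Hypothetical flows: eight source MACs talking to one destination.
    dst = "00:00:5e:00:53:ff"
    for i in range(8):
        src = f"00:00:5e:00:53:{i:02x}"
        print(src, "->", dst, "uses", pick_lsp(src, dst))
```

Because the choice depends only on the flow key, every frame of a given flow follows the same LSP, which avoids reordering. It also means a test with a single flow will load only one LSP, so verification traffic should contain many distinct flows.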

Verification

Behaviour may differ between Junos versions. Note that on the MX5, load balancing is configured under forwarding-options enhanced-hash-key rather than hash-key. In fact, all hash-key configuration must be removed; otherwise load balancing does not behave as expected. Load balancing is already supported on the MX5 by default.

The following command is useful to confirm the hash key configuration:

lab@PE1> request pfe execute command "show jnh lb" target tfeb0

SENT: Ukern command: show jnh lb

GOT:

GOT: Unilist Seed Configured 0x8bce4c39 System Mac address 00:00:00:00:00:00

GOT: Hash Key Configuration: 0x0000000000e00000 0xffffffffffffffff

GOT: IIF-V4: No

GOT: SPORT-V4: Yes

GOT: DPORT-V4: Yes

GOT: TOS: No

GOT:

GOT: IIF-V6: No

GOT: SPORT-V6: Yes

GOT: DPORT-V6: Yes

GOT: TRAFFIC_CLASS: No

GOT:

GOT: IIF-MPLS: No

GOT: MPLS_PAYLOAD: Yes

GOT: MPLS_EXP: No

GOT:

GOT: IIF-BRIDGED: No

GOT: MAC ADDRESSES: Yes

GOT: ETHER_PAYLOAD: Yes

GOT: 802.1P OUTER: No

GOT:

GOT: Services Hash Key Configuration:

GOT: SADDR-V4: No

GOT: IIF-V4: No

GOT:

LOCAL: End of file
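When comparing this output across several routers or Junos versions, it can help to reduce it to a set of Yes/No flags. The following is a minimal Python sketch, assuming the output has been captured as text in the format shown above; `parse_hash_flags` and the `services.` key prefix are hypothetical names introduced here, and `SAMPLE` is an excerpt of the output above.

```python
SAMPLE = """\
GOT: Hash Key Configuration: 0x0000000000e00000 0xffffffffffffffff
GOT: IIF-V4: No
GOT: SPORT-V4: Yes
GOT: IIF-BRIDGED: No
GOT: MAC ADDRESSES: Yes
GOT: ETHER_PAYLOAD: Yes
GOT: 802.1P OUTER: No
GOT:
GOT: Services Hash Key Configuration:
GOT: SADDR-V4: No
GOT: IIF-V4: No
"""


def parse_hash_flags(output: str) -> dict:
    """Return {field name: bool} for every 'GOT: <name>: Yes|No' line."""
    flags = {}
    prefix = ""
    for line in output.splitlines():
        line = line.strip()
        if not line.startswith("GOT:"):
            continue
        body = line[len("GOT:"):].strip()
        # Fields after this header repeat earlier names (e.g. IIF-V4),
        # so they are stored under a separate prefix.
        if body.startswith("Services Hash Key"):
            prefix = "services."
            continue
        name, sep, value = body.rpartition(":")
        value = value.strip()
        if sep and value in ("Yes", "No"):
            flags[prefix + name.strip()] = (value == "Yes")
    return flags


if __name__ == "__main__":
    for name, enabled in parse_hash_flags(SAMPLE).items():
        print(f"{name:20} {'Yes' if enabled else 'No'}")
```

For VPLS traffic the fields of interest are the bridged ones: in the output above, MAC ADDRESSES and ETHER_PAYLOAD are both Yes, so the hash takes the MAC addresses and the Ethernet payload into account.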

lab@PE1> show mpls lsp statistics

Ingress LSP: 3 sessions

To From State Packets Bytes LSPname

10.1.1.22 10.1.1.11 Up 1284 130968 PE1-to-PE2-LSP1

10.1.1.22 10.1.1.11 Up 1208 123216 PE1-to-PE2-LSP2

Total 3 displayed, Up 3, Down 0

Egress LSP: 3 sessions

To From State Packets Bytes LSPname

10.1.1.11 10.1.1.22 Up NA NA PE2-to-PE1-LSP1

10.1.1.11 10.1.1.22 Up NA NA PE2-to-PE1-LSP2

Total 3 displayed, Up 3, Down 0

Transit LSP: 0 sessions

Total 0 displayed, Up 0, Down 0
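To judge how evenly the traffic is split, the ingress packet counters above can be turned into percentages. A small Python sketch of that arithmetic follows; the counter values are copied from the output above, and `traffic_share` is a hypothetical helper name.

```python
def traffic_share(counters: dict) -> dict:
    """Return each LSP's share of the total, in percent."""
    total = sum(counters.values())
    if total == 0:
        return {name: 0.0 for name in counters}
    return {name: 100.0 * count / total for name, count in counters.items()}


# Ingress packet counters from "show mpls lsp statistics" above.
packets = {"PE1-to-PE2-LSP1": 1284, "PE1-to-PE2-LSP2": 1208}

if __name__ == "__main__":
    for name, pct in traffic_share(packets).items():
        print(f"{name}: {pct:.1f}%")
    # PE1-to-PE2-LSP1: 51.5%
    # PE1-to-PE2-LSP2: 48.5%
```

A split of roughly 51.5% to 48.5% indicates that both LSPs carry VPLS traffic. The byte counters agree: 130968 / 1284 and 123216 / 1208 both come to 102 bytes per packet.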

lab@PE1> monitor label-switched-path PE1-to-PE2-LSP2

To 10.1.1.22, From 10.1.1.11, state: Up

LSPname: PE1-to-PE2-LSP2, type: Ingress

Label in: -, Label out: 3

Port number: sender 4, receiver 7368, protocol 0

Record Route: 10.10.102.2

Traffic statistics: pps/bps

Output packets: 38813 0

Output bytes: 3958926 0